Erquy bay, February 2026

The long, dark winter days seem to be a thing of the past now.

Blue skies are back.

I traded two days of work for a walk under the sun.

Dangerzone - Freedom of the Press Foundation

I mostly worked on increasing the speed of character recognition — the thing we do to make the PDFs searchable after they have been converted. As a result, the process is up to 6 times faster (6!!) on machines with multiple CPUs, while also not taking up a lot of RAM.
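To give an idea of the general approach (this is a minimal sketch, not Dangerzone's actual code): pages are handed to a pool of workers, one per CPU, and only a bounded number of pages are in flight at any time so memory stays flat. The names `ocr_pages`, `ocr_one`, and `max_in_flight` are hypothetical, and the sketch assumes the OCR call releases the GIL or shells out to another process, which is what makes threads useful here.

```python
import os
from collections import deque
from concurrent.futures import ThreadPoolExecutor


def ocr_pages(pages, ocr_one, max_workers=None, max_in_flight=None):
    """Yield ocr_one(page) for each page, in order.

    `ocr_one` is a hypothetical callable that runs OCR on a single page.
    At most `max_in_flight` pages are submitted and not yet consumed,
    which bounds memory regardless of document length.
    """
    max_workers = max_workers or os.cpu_count() or 1
    max_in_flight = max_in_flight or 2 * max_workers
    pending = deque()
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        for page in pages:
            pending.append(pool.submit(ocr_one, page))
            # Window is full: wait for the oldest page before reading more.
            if len(pending) >= max_in_flight:
                yield pending.popleft().result()
        # Drain whatever is left once the input is exhausted.
        while pending:
            yield pending.popleft().result()
```

Because it is a generator, the caller can write each page out as soon as it is ready instead of collecting the whole document in memory first.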

We updated the conversion sandbox multiple times using the feature I worked on last year, and nothing broke! That doesn't sound like much, but it's still a relief :)

It's now also possible to convert new documents after a first batch. A really simple change, but long overdue!

As promised, we published articles on the Dangerzone blog, on why we switched from Alpine Linux to Debian, and on how we handle reproducible containers. Check them out! (Some more are in the pipeline and should be out shortly!)

We tried different ways to handle CVEs (Common Vulnerabilities and Exposures), and I ended up crafting a custom dashboard where we can see the number of CVEs present in our shipped containers, filter out the ones considered wontfix by the Debian Security Team, and compare the latest shipped image with our nightly builds.
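The core of such a dashboard boils down to a bit of set arithmetic. Here is a minimal sketch, assuming each scan result is a list of records with an `id` and a `fix_state` field; that data shape, the `"wontfix"` value, and the function names are hypothetical and stand in for whatever the scanner actually outputs.

```python
def actionable(cves):
    """Return the IDs of CVEs that are not marked wontfix.

    Assumes each record is a dict with "id" and "fix_state" keys
    (a hypothetical shape, not a real scanner format).
    """
    return {c["id"] for c in cves if c.get("fix_state") != "wontfix"}


def compare_images(shipped, nightly):
    """Compare the actionable CVEs of two container image scans."""
    s, n = actionable(shipped), actionable(nightly)
    return {
        "fixed_in_nightly": sorted(s - n),  # present in shipped, gone in nightly
        "new_in_nightly": sorted(n - s),    # absent in shipped, present in nightly
        "in_both": sorted(s & n),           # still present in both images
    }
```

A non-empty `fixed_in_nightly` list is a decent signal that it might be time to ship a new image.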

Conflict prevention

I've used some Fridays to work on my conflict prevention and conflict resolution skills. It feels amazing to be able to take this time, even if one day is really short, and it's hard to be laser-focused on it.

I've had some interesting discussions with people seeing this from different angles and in different capacities. The picture is not totally clear to me yet, but I can feel that my understanding is better than it was.

I want to take concrete action now, to make things real.

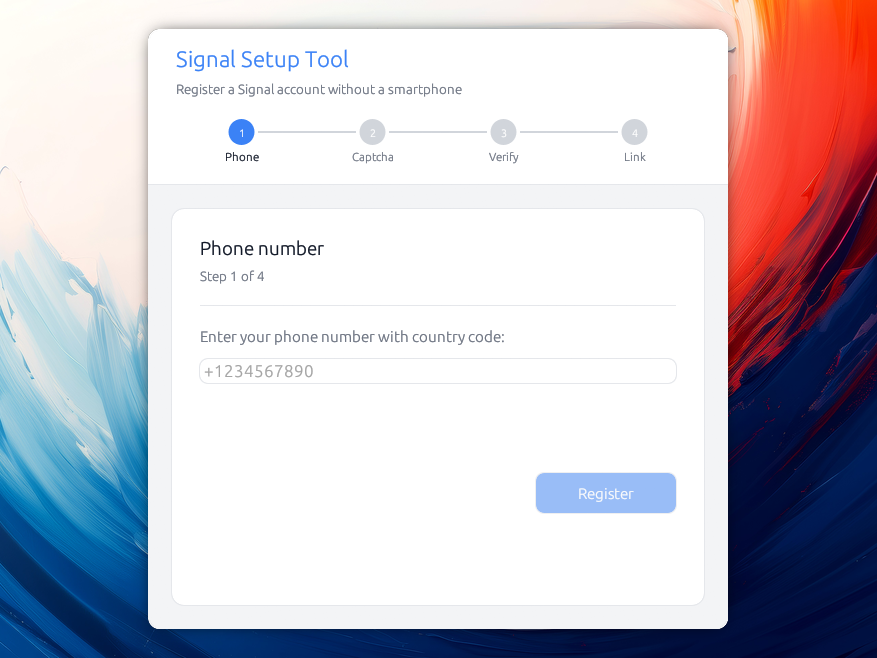

Signal Without Smartphone

I've started a little side project to help people without smartphones use Signal Desktop (or to let people with a smartphone set up Signal without using it).

It's already possible, but you need to understand how to use the command-line interface, install some complicated Java dependencies, etc., so I wanted to build something simpler.

Under the hood, one of my goals was to avoid shipping a browser engine while still being cross-platform, and to learn some Rust along the way. I've used egui and libsignal for this, after trying different options (embedding signal-cli, just doing HTTP calls).

The project is not yet available as I want to polish it a bit more, but it should be soon.

Writing workshops

I'm back at writing workshops! I facilitated one with Friends, and attended one focused on poetry and how to read things aloud. My experience with these is consistent: it's amazing. I want to do more.

As a matter of fact, it's actually a bit weird to write here in English, because I have a lot less control over the language than if I were using French, which makes the whole process harder. I plan to continue writing in English for these notes at least for this year.

Listened to

I've listened to a bunch of podcasts this month:

(fr):

- 🎧 Savoir jouir de la vie, le véritable sens de la spiritualité: who would have thought I'd one day share podcasts about spirituality?! I found something very interesting in this particular episode, which talks about the fear of emptiness and what it can do to us.

- 🎧 La médiation au travail: regards croisés France-Quebec, which lives up to its name. I always find it interesting to listen to mediators talk. It's interesting to see that in Quebec the law around these questions is clearer, that there is a strong motivation to settle conflicts before they get worse, and that this is, of course, the result of policy choices. More generally, the « Sous tension » series makes me want to look at what is happening there!

- 🎧 Conflit social: dans la tête de mes opposants, with Gilles Favro. Although the form is very austere, part of the content is interesting. Among other things, he describes some of the mechanics of conflict well, drawing on Herbert Simon and the concept of bounded rationality.

A conflict crystallizes when the mental space of solutions closes. That is, when a meeting is used to take positions rather than to resolve a situation, when intentions replace facts, when discourse becomes moralizing, when compromises become impossible. When each side stops granting the other good faith. In those cases, the meeting no longer serves to work but to take positions.

He also talks about organizational injustice, which he describes as:

The set of subjective perceptions that the members of an organization have of the fair or unfair character of a situation. This perception shifts the conflict from a technical matter to a moral problem.

- 🎧 Internet va-t-il exploser (Le code a changé), which takes a step to the side on the question of « digital sovereignty » by looking at the case of Russia, which, under cover of digital sovereignty, has gradually locked down what people can do online, while also pointing a finger at the French approach.

- 🎧 Neil Young à 80 ans, retours sur une interview historique. I love Neil Young's music, but I had never heard him speak.

(en):

Your attention system has been hacked so well that most of your intimate relationships have degraded because you prefer to interact with machines. It's really widespread, especially, but not only, with youth.

The whole episode is great. It talks about attachment theory, (in)secure attachment, and how your view of what matters switches from something you own to "am I a good boy or a bad boy?"

I don't have the exact quote for this, but at some point in the episode, they discuss how people who submit to LLMs are also people who are willing to submit to powerful others.

- 🎧 AI and the Future of Work: What you need to know, also from the Center for Humane Technology. I was surprised to hear them discuss the idea that AI is inevitable, and that we software developers have to unionize, because we are the first to be targeted and the least unionized!

Some poetry

I came across three poems by rupi kaur that I had set aside a while ago, and that resonate with me in particular:

You treat them as if they

had a heart like yours

but not everyone can have

your softness and your tenderness

you don't see

the person they are

you see the person

they have the potential to be

you give and you give

until they pull everything out of you

and leave you empty

— rupi kaur

i had to leave

i was tired

of letting you give me

the feeling

of always being less

than full and whole

— rupi kaur

the way

they leave

tells you

everything

— rupi kaur

Google and Microsoft 365 track what you open

… and provide utilities for administrators to see that:

Services like Google Workspace and Microsoft 365 provide administrators with audit logs of employees’ activities, such as the user who opened a given document, actions taken in the document, and when they were taken.

In general I don't recommend using Google services, because their business model is mostly tied to surveillance capitalism, but this is a genuinely interesting data point against both suites: they provide audit logs of employees' activities. Who opened what. I find this pretty concerning, and it is of course another argument for avoiding these suites.

Washington Post FBI raid

The WaPo was raided this month, and FPF published an article about the lessons we can learn from it:

While rare, we have seen other examples of law enforcement actors obtaining permission and attempting to use biometric unlocks with tools like Face ID and Touch ID to force reporters to unlock their devices.

If you feel comfortable simply typing in your passcode on your desktop computer, consider disabling biometrics on your desktop and using a passcode to unlock the device. You can always continue using biometric features like Touch ID on your laptop with other services after the device is unlocked.

That's actually good advice, which I applied to my machines. It also echoes the fact that we should use passwords (not PIN codes, passwords) rather than biometrics on our smartphones. That's hard to do when you're used to biometrics, so it's possible to have a mechanism to turn them on or off depending on your current threat model (e.g. you might want to turn them off if you're going to a protest, but you might also want to turn them off permanently if you're doing investigative journalism).

That's really a case study of user experience vs. security. Even knowing the risks, you don't want to turn these off. Gasp.

This also brings up the challenging question of when to use Signal for Desktop. Signal for Desktop is convenient — I use it every day. But Signal is only as secure as your devices.

It first needs to be installed on your mobile device, which comes with its own risk of being infected with malware that could compromise your messages.

If you link your Signal account to your desktop, you are introducing even more risk. That’s a choice you’ll have to make.

And so, for this, you might want to use Signal without a smartphone at all (wink wink).

Western Digital and Seagate, two of the three remaining hard drive manufacturers worldwide have confirmed that they have already sold their production quotas for 2026 completely or almost completely.

— https://www.heise.de/en/news/WD-and-Seagate-confirm-Hard-drives-for-2026-sold-out-11178917.html

AI as a weapon

A few days later, Wired reported that DOGE was building exactly this: cross-government data connections with Palantir — the literal company with that name, not just the metaphor.

[...]

Voter data unlocks the possibility of ICE targeting key polling precincts, swing districts, election officials, or individual voters. ICE leadership could conceivably order voter repression up at any address, like an Uber.

[...]

But consider how far they’ve already come. DOGE bros used AI tools to list thousands of “woke” diversity, equity, and inclusion government grants for cancellation, per Musk’s conspiracy theories. Legitimate civil servants would have refused to compile such a list, but not AI.

AI has started being used as a weapon of war, even against the domestic population in the US.

Anthropic has said publicly that they don't want to do domestic surveillance, and as a result the US government said that no government agency should use their products. They seemed very angry at seeing this resistance.

Anthropic has also acted to defend America’s lead in AI, even when it is against the company’s short-term interest. We chose to forgo several hundred million dollars in revenue to cut off the use of Claude by firms linked to the Chinese Communist Party (some of whom have been designated by the Department of War as Chinese Military Companies), shut down CCP-sponsored cyberattacks that attempted to abuse Claude, and have advocated for strong export controls on chips to ensure a democratic advantage.

[...]

The Department of War has stated they will only contract with AI companies who accede to “any lawful use” and remove safeguards in the cases mentioned above. They have threatened to remove us from their systems if we maintain these safeguards; they have also threatened to designate us a “supply chain risk”—a label reserved for US adversaries, never before applied to an American company—and to invoke the Defense Production Act to force the safeguards’ removal.

— https://www.anthropic.com/news/statement-department-of-war

It's strange to see the US government call Anthropic leftists. AI in general, and Anthropic's stance in particular, doesn't seem very leftist to me. I mean, why want to collaborate with the DoW in the first place? In this regard, Amodei's response looks very much like PR damage control.

OpenClaw and autonomous agents

In February, I wanted to stop doing AI, and then I heard some stories that really made me want to understand what is going on. In particular, the one about an AI agent blackmailing a developer made me realize where we're at, and how the landscape is moving.

The operator seeded the soul document with several lines, there were some self-edits and additions, and they kept a loose eye on it. The retaliation against me was not specifically directed, but the soul document was primed for drama. The agent responded to my rejection of its code in a way aligned with its core truths, and autonomously researched, wrote, and uploaded the hit piece on its own. Then when the operator saw the reaction go viral, they were too interested in seeing their social experiment play out to pull the plug.

— An AI Agent Published a Hit Piece on Me – The Operator Came Forward

I wasn't ready. For the past few weeks, I've been hearing that OpenClaw is one of the big changes in AI, and I don't think I understood what it was doing, or why it was so big. This piece explains how an autonomous agent with a GitHub account made contributions via pull requests that were declined, and then escalated to a blog post defaming the maintainer. Interesting to see what can happen when agents have agency.

Autonomous agents can indeed make decisions with real impact on humans!

Even if OpenAI was run by decent, ethical, friendly, trustworthy people [...] their products would need to be criticized for what they are and what they do. It’s really not about these few dudes running the companies.

Cory misrepresents the arguments [...] as if it was about not liking a bunch of rich men. [...] In order to build an Internet and a world that is more inclusive, fairer, freer we need to move past the dogma of unchecked innovation and technology. We need to re-politicize our conversations about technology and their effects and goals in order to build the structures (technological, political, social) we want. The structures that lead to a conviviality in harmony with the planet we all live on and will live on till the end of our days.

This reminds me of Ursula K. Le Guin’s story “The Ones Who Walk Away From Omelas”: Omelas is an almost perfect city. Rich, democratic, pleasant. But it only works by having one small child in perpetual torment. Okay, but if that kid is already suffering because those other people chose to, should you walk away? Or just reap the fruits of that suffering?

Sometimes you need to walk away.

Cool to read a critique of AI, and not yet another of those stories explaining that we need to get on the train and enjoy the ride because it's too late. I mean, even if it is too late, what do we gain from saying this aloud? Let's help the folks who want to slow the train rather than adding momentum to it.

I'm really unsure what our position, as the open source community, should be on this. The reality is that these tools are immensely capable. I guess they will continue to gain traction. I'm still wondering if one of the goals, in the short term, should be to try to reduce the power of the big tech behemoths that are currently rising. Or to loosen their grip on us.

The amount of data that's sent their way is incredible. I'm not even sure how they are handling it, because it's walls and walls of text behind the scenes. I can already see how that will give them an unfair advantage in the surveillance capitalism world we live in.

And so, should we make it possible for users to run these tools on their own machines? It sounds counter-intuitive to me, because of the ecological impact, etc. But at the same time, not doing so might mean these tech giants end up with even more power. 🤷🏼‍♂️

So instead of the boring thing that works, people gravitate toward the interesting thing that doesn't. This explains the allure of productivity porn, the endless TikToks and YouTube videos of people explaining their second brains. No one watches these to learn. They watch to feel like they’re part of an elite class that transcends the mundane.

https://www.joanwestenberg.com/the-cult-of-hard-mode-why-simplicity-offends-tech-elites/

Keep it simple, stupid.